SequentialLR

- class torch.optim.lr_scheduler.SequentialLR(optimizer, schedulers, milestones, last_epoch=-1)

Contains a list of schedulers that are called sequentially during the optimization process.

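Specifically, the schedulers are called according to the milestone points, which should give the exact epoch intervals during which each scheduler is active.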
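The mapping from epoch to active scheduler can be sketched in plain Python. This is an illustration of the idea under the assumption that a milestone marks the first epoch of the next scheduler; `active_scheduler_index` is a hypothetical helper, not part of the PyTorch API.

```python
from bisect import bisect_right


def active_scheduler_index(milestones, epoch):
    """Return the position (in the schedulers list) of the scheduler
    that is active at `epoch`.

    Assumption: `milestones` is sorted in increasing order and the
    schedulers list has exactly len(milestones) + 1 entries.
    """
    # bisect_right counts how many milestones are <= epoch, which is
    # the number of scheduler hand-offs that have already happened.
    return bisect_right(milestones, epoch)


# One milestone, two schedulers (as in the example on this page).
print(active_scheduler_index([20], 19))  # still the first scheduler
print(active_scheduler_index([20], 20))  # hand-off to the second scheduler

# Two milestones, three schedulers.
print([active_scheduler_index([5, 15], e) for e in (0, 4, 5, 14, 15, 30)])
```

With `milestones=[20]`, epochs 0 through 19 map to index 0 and epoch 20 onward maps to index 1, which is why one milestone is paired with two schedulers.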

- Parameters

Example

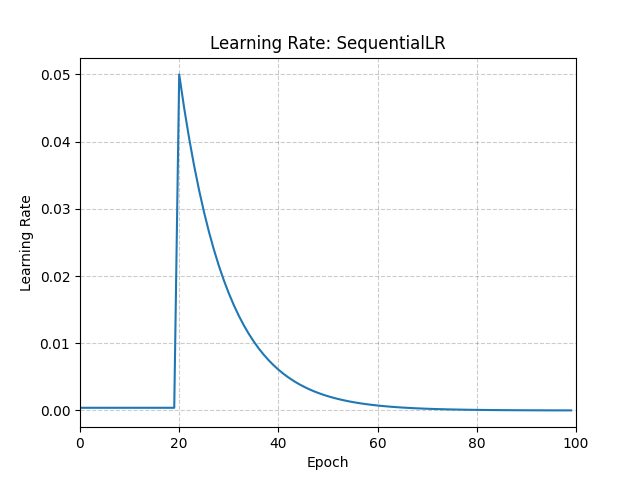

>>> from torch.optim.lr_scheduler import ConstantLR, ExponentialLR, SequentialLR
>>> # Assuming optimizer uses lr = 0.05 for all groups
>>> # lr = 0.005     if epoch == 0
>>> # lr = 0.005     if epoch == 1
>>> # lr = 0.005     if epoch == 2
>>> # ...
>>> # lr = 0.05      if epoch == 20
>>> # lr = 0.045     if epoch == 21
>>> # lr = 0.0405    if epoch == 22
>>> scheduler1 = ConstantLR(optimizer, factor=0.1, total_iters=20)
>>> scheduler2 = ExponentialLR(optimizer, gamma=0.9)
>>> scheduler = SequentialLR(
...     optimizer,
...     schedulers=[scheduler1, scheduler2],
...     milestones=[20],
... )
>>> for epoch in range(100):
...     train(...)
...     validate(...)
...     scheduler.step()
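The learning rates listed in the comments of the example can be reproduced by hand. The following is a sketch of the arithmetic only, not PyTorch's implementation; `expected_lr` is a hypothetical helper whose defaults are taken from the example (base lr 0.05, `factor=0.1`, milestone at epoch 20, `gamma=0.9`).

```python
def expected_lr(epoch, base_lr=0.05, factor=0.1, milestone=20, gamma=0.9):
    """Learning rate the example is expected to produce at `epoch`.

    Assumption: before the milestone the constant phase scales the base
    rate by `factor`; from the milestone onward the exponential phase
    starts from the base rate and decays by `gamma` per epoch.
    """
    if epoch < milestone:
        # Constant phase: base_lr * factor.
        return base_lr * factor
    # Exponential phase, counted from the milestone epoch.
    return base_lr * gamma ** (epoch - milestone)


for epoch in (0, 1, 19, 20, 21, 22):
    print(epoch, round(expected_lr(epoch), 6))
```

The milestone coincides with `total_iters=20`, so the constant phase ends exactly where the exponential decay begins.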

- load_state_dict(state_dict)

Load the scheduler's state.

- Parameters

state_dict (dict) – Scheduler state. Should be an object returned from a call to state_dict().
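A checkpoint round trip might look like the sketch below. It assumes PyTorch is installed; the toy `torch.nn.Linear` model and the `build_scheduler` helper are hypothetical and exist only to make the example self-contained. The restored scheduler is rebuilt with the same structure before its state is loaded.

```python
import torch
from torch.optim.lr_scheduler import ConstantLR, ExponentialLR, SequentialLR


def build_scheduler():
    """Hypothetical helper: build a toy optimizer and the scheduler
    configuration used in the example on this page."""
    model = torch.nn.Linear(2, 1)
    optimizer = torch.optim.SGD(model.parameters(), lr=0.05)
    scheduler1 = ConstantLR(optimizer, factor=0.1, total_iters=20)
    scheduler2 = ExponentialLR(optimizer, gamma=0.9)
    scheduler = SequentialLR(
        optimizer,
        schedulers=[scheduler1, scheduler2],
        milestones=[20],
    )
    return optimizer, scheduler


# Advance a few epochs, then capture the state of both objects.
optimizer, scheduler = build_scheduler()
for _ in range(5):
    optimizer.step()   # no gradients here; stands in for a training epoch
    scheduler.step()
saved_optimizer_state = optimizer.state_dict()
saved_scheduler_state = scheduler.state_dict()

# Rebuild identical objects and restore the saved state into them.
new_optimizer, new_scheduler = build_scheduler()
new_optimizer.load_state_dict(saved_optimizer_state)
new_scheduler.load_state_dict(saved_scheduler_state)

print(new_scheduler.last_epoch == scheduler.last_epoch)
```

In a real training script the two state dicts would typically be written to disk together with the model weights, for example with `torch.save`, and read back with `torch.load` before calling `load_state_dict`.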